The other day I was marveling at the number of completion badges and learning opportunities in the tech world as I looked over one of the professional social networks. A lot of the education has become more focused and usable, and also more accessible. Proprietary products seem to be falling by the wayside, since no one can learn to use them without already paying a high license cost.

Usefulness is a big question. Many of us have limited time, and we need as much useful information condensed into as little time as possible. I have this same conundrum with my workouts: I love to cycle, but a run will usually pack more heart activity into a shorter amount of time, so more bang for the buck. Also, most companies in my experience do not pay for education, so maintaining your skillset becomes another extracurricular time drain. Picking out what to learn is an art in and of itself. What I try to do is focus on my own interests that build on what I already know and will, down the road, make me more knowledgeable and useful. For instance, Linux. As a developer I can find my way around Linux quite well, but I have many knowledge gaps, so learning a bit about kernel architecture or security might help with programming business applications. But I also cannot (for myself) expect expert-level knowledge from a class or certification without real-world, hands-on experience.

Merit matters. Merit in the sense of the real-world application of learning. Using the training in conjunction with design and implementation on a real-world solution is optimal. Many times I’ve seen the first iteration, lifted from the first page of Google results, go to production. Personally I have only done this type of thing for a proof of concept, but as we all know, POCs often become production.

When I see the badges I often wonder if there is any merit behind them. For instance, if I see an Office 365 administrator learning badge on someone who is a manager, I may know that they have this knowledge, but can they use it? And were employees offered the same opportunity for education, or was this training a privilege of a position that has no hands-on work or insight into detailed use-case implementation? What is the real merit, the badge? And if you remember, in Agile a certification was anathema. Now Agile certifications are everywhere, yet I haven’t seen much change in it at all in well over a decade. It’s always dragging behind the implementation tools, always more dashboards.

As for collaboration: with the badges comes gamification and competition. I don’t know about you, but I don’t like to go to work day in and day out feeling like I am in some sort of competition with my coworkers or being rated on how well I use Teams or Slack. Meetings can descend into displays of one-upmanship. Tiresome. In a classroom with grades there would be competition too, just not so transparent, and it’s all a net knowledge gain; now it’s all out in everyone’s face. In the social setting, clicking through a badge process might matter more than the knowledge. But then where is the responsibility in holding a badge that only proves a person has won it, versus being useful in the skill? When code is rolled out, bad code affects others’ lives in extremely negative ways: unnecessary overtime, nights and weekends, as well as missed life opportunities. Sometimes this competition within collaboration exposes people withholding information in order to be more competitive.

Being honest, I wonder if this brave new world of badges and visibility is creating a community of Campbell’s Law coworkers.

Constant productivity tracking, e-collaboration, and the proof-of-knowledge fixtures now available seem to be uncharted territory for measuring real merit or having real collaboration. I think I am just going to ride this one out, as I want to retain the joy of creation and for me this can sap that joy; but we’ll see. In the meantime, my strategy continues:

- Outside interest in open source projects. Keep something for yourself and it will contribute.

- Practice via certs or kata. It seems that the big companies are offering free or discounted certifications and training. See them as possibly contributing to your work, but more likely as being for the joy of learning and building toward the future. It’s unlikely your company will pay for it, so make sure you want to do it and that it contributes.

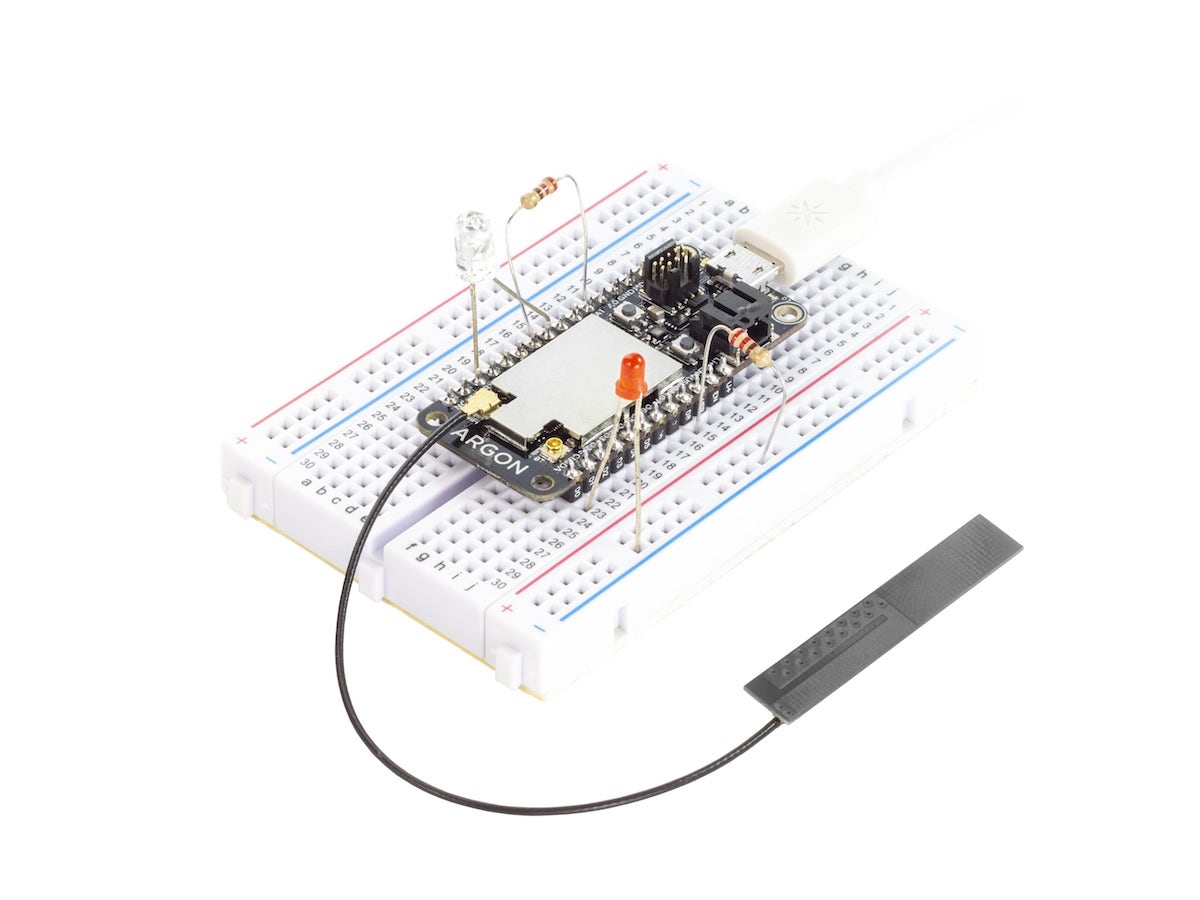

- Merit – via trying to do something real. Building something at home helps.

- Collaboration: never give up helping out others. Yeah, you will probably get no credit for some things you do, but actually collaborating on something will give you insights you cannot get on your own. Fight your mind lock.

I was reading through some Code Newbie tweets and was quite impressed with the enthusiasm of potential developers who had little, if any, work experience. Well I’ve got some news for them. Know that stress you are having at finding your first job, doing your first job, doing any job?
