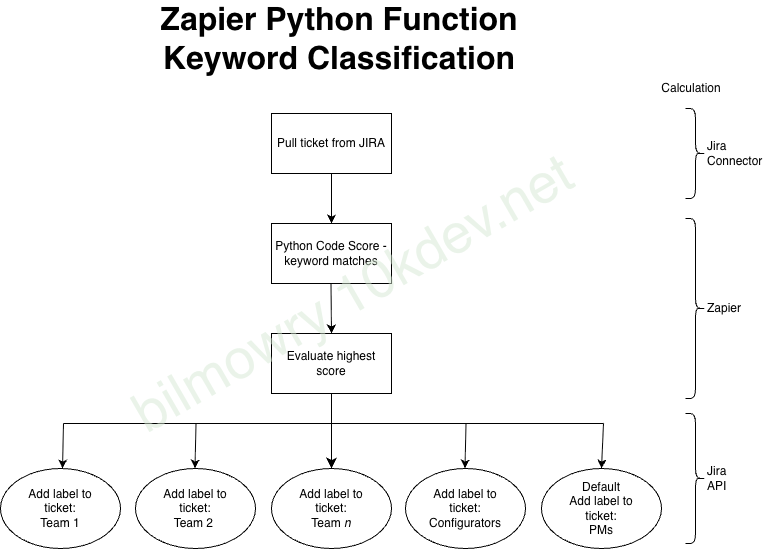

This post is about hooking up Amazon Q to GitHub so it can review your pull requests. It also looks at what happens when Q reviews an unsupported language (Groovy) compared with a supported one (Java).

The list of languages Q supports for code review, as of today, is here:

https://docs.aws.amazon.com/amazonq/latest/qdeveloper-ug/q-language-ide-support.html

Here is a printout of that page, as a receipt:

20250828 Supported languages for Amazon Q Developer in the IDE – Amazon Q Developer

Language support for code reviews with /review

Amazon Q can create code reviews and provide automatic code fixes for files and projects written in the following languages:

- Java ‐ Java 17 and earlier

- JavaScript ‐ ECMAScript 2021 and earlier

- Python ‐ Python 3.11 and earlier, within the Python 3 series

- C# ‐ All versions (.NET 6.0 and later recommended)

- TypeScript ‐ All versions

- Ruby ‐ Ruby 2.7 and 3.2

- Go ‐ Go 1.18

- C ‐ C11 and earlier

- C++ ‐ C++17 and earlier

- PHP ‐ PHP 8.2 and earlier

- Kotlin ‐ Kotlin 2.0.0 and earlier

- Scala ‐ Scala 3.2.2 and earlier

- JSX ‐ React 17 and earlier

- Infrastructure as Code (IaC) languages

  - AWS CloudFormation ‐ 2010-09-09

  - Terraform ‐ 1.6.2 and earlier

  - AWS CDK ‐ TypeScript and Python

Note that Java is on the current list of supported languages, but Groovy is not.
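If you want to check ahead of time which files in a PR fall under that list, a lookup by file extension is one way to sketch it. The class name and the extension-to-language mapping below are my own assumptions for illustration (Amazon does not publish the list in this form). Version limits are ignored, and CloudFormation and CDK are left out because their file extensions are shared with other formats.

```java
import java.util.Locale;
import java.util.Map;
import java.util.Optional;

// Hypothetical helper: maps a file extension to a language on the /review list above.
// The extensions are an assumption for illustration, not something Amazon publishes.
final class ReviewLanguageCheck {

    private static final Map<String, String> BY_EXTENSION = Map.ofEntries(
        Map.entry("java", "Java"),
        Map.entry("js", "JavaScript"),
        Map.entry("py", "Python"),
        Map.entry("cs", "C#"),
        Map.entry("ts", "TypeScript"),
        Map.entry("rb", "Ruby"),
        Map.entry("go", "Go"),
        Map.entry("c", "C"),
        Map.entry("cpp", "C++"),
        Map.entry("php", "PHP"),
        Map.entry("kt", "Kotlin"),
        Map.entry("scala", "Scala"),
        Map.entry("jsx", "JSX"),
        Map.entry("tf", "Terraform")
    );

    // Returns the listed language for a file name, or empty if the extension is not mapped.
    static Optional<String> languageFor(String fileName) {
        int dot = fileName.lastIndexOf('.');
        if (dot < 0 || dot == fileName.length() - 1) {
            return Optional.empty(); // no extension at all
        }
        String ext = fileName.substring(dot + 1).toLowerCase(Locale.ROOT);
        return Optional.ofNullable(BY_EXTENSION.get(ext));
    }

    public static void main(String[] args) {
        for (String name : new String[] {"badcode.java", "badcode.groovy"}) {
            System.out.println(name + " -> " + languageFor(name).orElse("not on the list"));
        }
    }
}
```

With this mapping, badcode.java resolves to Java and badcode.groovy comes back empty, which matches the list above.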

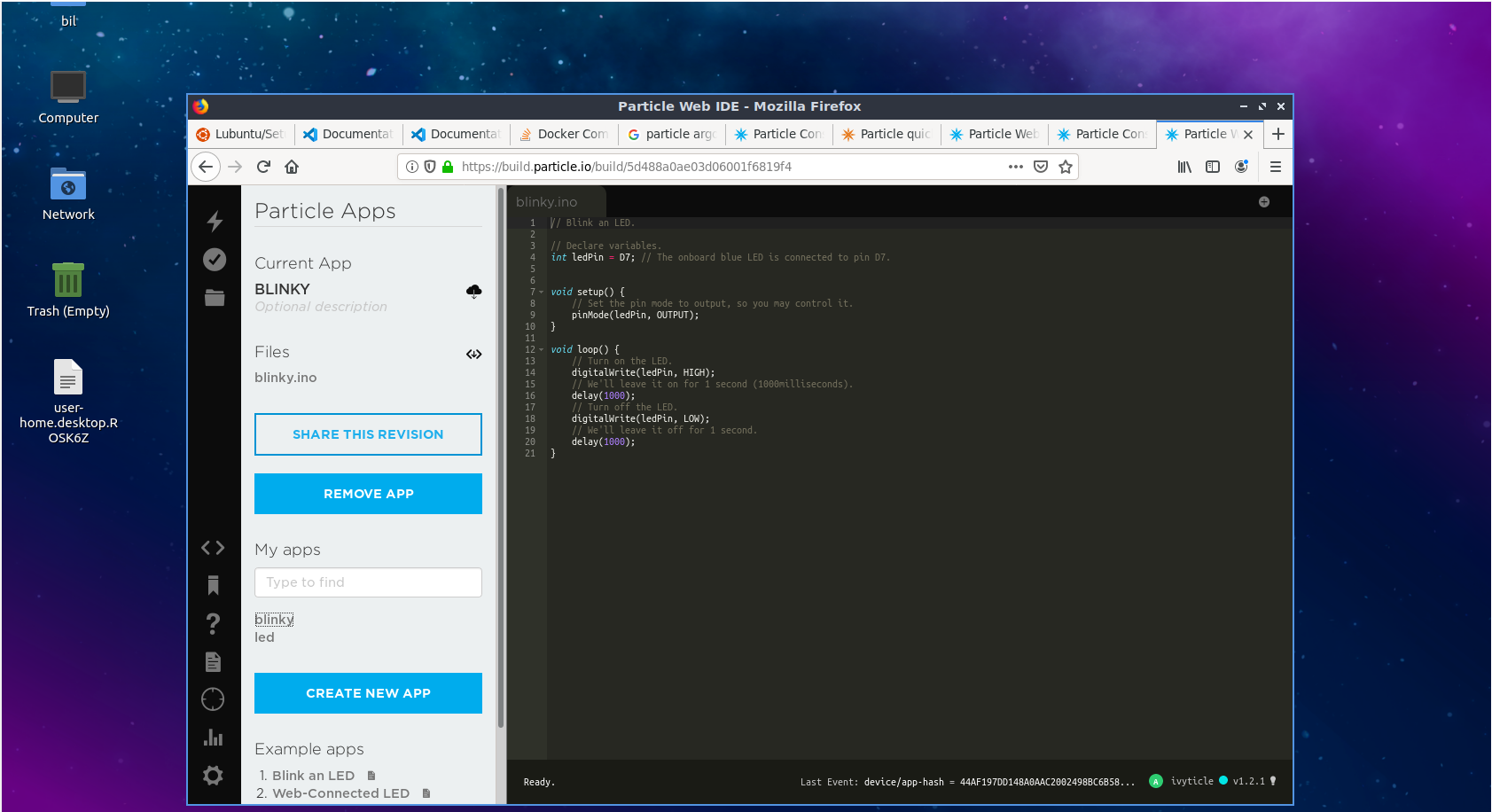

First, you will need a GitHub account, a repository, and an Amazon Q Account.

Add the Amazon Q App to your GitHub account:

Go to settings (menu under your profile), then Applications:

Visit the GitHub Marketplace in Applications and install Amazon Q Developer:

The install should be free. It asks for a billing address, but you don’t need a card or anything. You can give Q access to all of your repositories or only particular ones.

The Q app should now be installed in your GitHub account.

Next, connect GitHub in Amazon Q Developer. Log in and go to Amazon Q Developer, then open the GitHub section and choose Register Installations.

Authorize Q, then Register. You’ll get a confirmation.

Now everything is connected, and it’s time to do a code review.

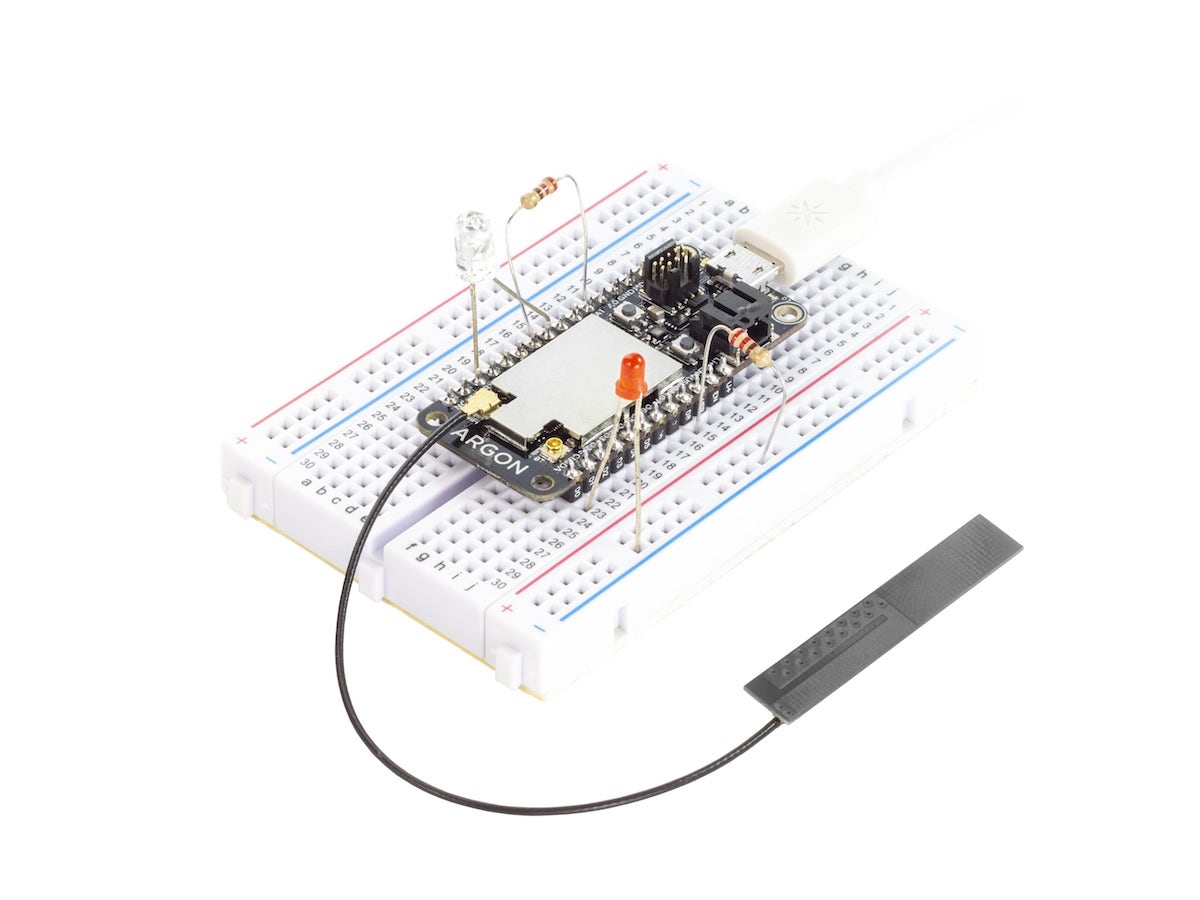

You can go out to an AI (Gemini in this case) and generate some bad code test files. I generated two: one for Groovy and one for Java. Some of the project I am on now is Groovy, and the Amazon reps said it would work even though, as of this date, Groovy is not a supported language for Q.

The prompts:

- can you generate a Groovy file that has security and syntax issues so that I can test my code review process

- generate this same thing but as a java file

Here are the Groovy and Java test files to look at (printed as PDF):

badcode.groovy badcode.java

These files have errors in them, which AWS should catch. I am not going to do an in-depth study of which errors it might see, just whether the review works and decorates the code in GitHub.

Isn’t AI great? You don’t even need to hire a real developer to get bad code.

Here is a list of things that are wrong in these files, from Gemini.

Bad Groovy:

Security Issues

- Command Injection: The insecureMethod() concatenates user input directly into a shell command, which can allow an attacker to execute arbitrary commands.

- SQL Injection: The vulnerableSql() method builds a SQL query string by concatenating data, leaving it open to SQL injection attacks.

- Directory Traversal: The insecureFileAccess() method uses user input to access a file path without proper validation, which could allow an attacker to read sensitive files outside of the intended directory.

Syntax and Style Issues

- Missing Semicolon: In Groovy, while semicolons are optional, their absence can sometimes lead to unexpected behavior, especially when combining multiple statements on a single line. The provided example shows a simple case where a missing semicolon could be a point of review.

- Misspelled Keyword: The reutrn keyword is misspelled, which will cause a compilation error.

- Unmatched Parenthesis: A parenthesis is missing in the println statement, which will also cause a compilation error.

- Redundant Comparison: The if (flag == true) statement is redundant. A more concise and idiomatic Groovy style would be if (flag).

- Unused Variable: The unusedVariable is declared but never used, which is a common code smell that a good code review should identify.

Bad Java:

Security Issues

- Command Injection: The insecureMethod() concatenates user input into a shell command using Runtime.getRuntime().exec(). This is a classic command injection vulnerability, as an attacker could provide input that executes malicious commands on the system.

- SQL Injection: The vulnerableSql() method builds a SQL query string by directly concatenating an int and String into the query. This leaves the application open to SQL injection attacks, where an attacker could manipulate the query to gain unauthorized access to data.

- Directory Traversal: The insecureFileAccess() method uses a user-controlled path to create a File object. Without proper validation, this is a directory traversal vulnerability, allowing an attacker to access sensitive files outside the intended directory, such as /etc/passwd.
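For contrast, here is a minimal sketch of what safer versions of those three patterns commonly look like in Java. None of this comes from badcode.java: the class name, method names, the ls command, the users query, and the /srv/data directory are all hypothetical, chosen only to illustrate the usual fixes (argument lists instead of a shell string, bound parameters instead of concatenation, and a base-directory check).

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import java.util.List;

// Hypothetical safer counterparts to the three issues above. Not taken from
// badcode.java; names, command, and query are assumptions for illustration.
final class SaferAlternatives {

    // Command injection: keep user input as its own argument (suitable for
    // ProcessBuilder) so no shell ever parses it. "--" ends option parsing.
    static List<String> buildListCommand(String userSuppliedDir) {
        return List.of("ls", "-l", "--", userSuppliedDir);
    }

    // SQL injection: bind values as parameters instead of concatenating them.
    // The caller is responsible for closing the returned statement.
    static PreparedStatement findUser(Connection conn, int id, String name) throws SQLException {
        PreparedStatement stmt = conn.prepareStatement(
            "SELECT * FROM users WHERE id = ? AND name = ?");
        stmt.setInt(1, id);
        stmt.setString(2, name);
        return stmt;
    }

    // Directory traversal: resolve against a base directory, normalize, and
    // reject anything that ends up outside that base.
    static Path resolveInside(Path baseDir, String userPath) {
        Path base = baseDir.toAbsolutePath().normalize();
        Path resolved = base.resolve(userPath).normalize();
        if (!resolved.startsWith(base)) {
            throw new IllegalArgumentException("Path escapes base directory: " + userPath);
        }
        return resolved;
    }

    public static void main(String[] args) {
        // The whole string stays a single argument; nothing is executed here.
        System.out.println(buildListCommand("reports; rm -rf /"));
        System.out.println(resolveInside(Paths.get("/srv/data"), "reports/a.txt"));
        try {
            resolveInside(Paths.get("/srv/data"), "../../etc/passwd");
        } catch (IllegalArgumentException e) {
            System.out.println("rejected: " + e.getMessage());
        }
    }
}
```

The path check only normalizes the path text and does not follow symlinks, so treat it as a starting point and not a complete defense.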

Syntax and Style Issues

- Compiler Errors: Unlike Groovy, Java is a strictly typed and compiled language. Missing semicolons, misspelled keywords (reutrn), and unmatched parentheses will cause the program to fail to compile, which is a major red flag in a code review.

- Redundant Comparison: The condition flag == true is redundant and considered bad practice in Java. A more idiomatic and concise style is simply if (flag). This is a common style issue that a good code review should catch.

- Unused Variable: The unusedVariable is declared but never used. Most IDEs and compilers will issue a warning about this, as it indicates potentially dead code or a mistake. This is also a key code smell to identify during a review.
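The two style findings are easy to picture side by side. This is a small sketch with hypothetical class and method names, not an excerpt from badcode.java. Both methods behave the same; only the second is what a reviewer would want to see.

```java
// Hypothetical illustration of the two style findings; not taken from badcode.java.
final class StyleExamples {

    // Flagged style: comparing a boolean to true, plus a local that is never read.
    static String describeVerbose(boolean flag) {
        int unusedVariable = 42; // never read; most IDEs warn here
        if (flag == true) {
            return "enabled";
        }
        return "disabled";
    }

    // Idiomatic style: test the boolean directly and drop the dead local.
    static String describeIdiomatic(boolean flag) {
        return flag ? "enabled" : "disabled";
    }

    public static void main(String[] args) {
        System.out.println(describeVerbose(true) + " / " + describeIdiomatic(true));
        System.out.println(describeVerbose(false) + " / " + describeIdiomatic(false));
    }
}
```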

In my repository I have two branches, one for Groovy and one for Java. I’ll open a separate PR for each and then wait for Q’s code review.

Java PR:

Groovy PR:

Q is thinking about the review:

It takes a little time. Here are the results for Java, where it finds many issues (more than shown here):

But with Groovy, an unsupported language, it finds nothing:

Conclusion

It works rather well for a supported language, almost as well as my experience with SonarQube decoration. If you are working in a low-quality environment, you could turn this on, require PRs, and get good code reviews for free at the point of merge.

As for Groovy and other unsupported languages, be careful: Amazon might write off your code base and say “be patient.” It doesn’t matter how cheap a tool is if it sucks up your time. I have found the Groovy support in the IDE with Q kind of OK; it is just the review that doesn’t work.