I’ve tried my hardest over the years to simplify things, but tools are tools, and a developer’s existence is tools. Sometimes I think the paradigm is like a good cook’s, where less is more; for instance, some people like using one IDE for everything: SQL, Java, JavaScript. Although I do cook like that (a good knife does almost everything for me), I have mostly stopped working that way; many tools are fine as long as they are lightweight. For instance, I can’t use the SpringSource Tools version of Eclipse; it is too heavyweight and often interferes with the other plugins I like to have. In that case, I am more like a mechanic, where a specialty tool brings home the bacon.

Also, I always have both Windows and Linux around, even at home. So here’s what I’m working with now.

Two Development Boxes

- Fedora 19 with Gnome 3 (one Swing project)

- Windows 7 (three projects, web services)

On the Fedora 19 Machine

IDEs

- IntelliJ 13 Community – my primary development environment. I need the superior search utilities because the Swing project is massive, at least half a million lines of code.

- Eclipse Juno – because the rest of the team is using that; I have to keep the environments up to snuff.

- Gedit

Languages

- Several JDKs: 6 and 7, 32-bit and 64-bit. I run the Swing app in 32-bit (per requirements) and run the IDEs in 64-bit.
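With several JDKs side by side, it helps to confirm which one a given process actually picked up. Here is a minimal sketch, not part of the original setup: the class name `JvmInfo` is made up for illustration, and the `sun.arch.data.model` property is HotSpot-specific, so it may be missing on other JVMs.

```java
// Hypothetical helper: prints which JDK and bitness the current process runs on.
class JvmInfo {

    /** Returns "32", "64", or "unknown" for the running JVM. */
    static String bitness() {
        // HotSpot-specific property; other JVMs may not define it.
        String model = System.getProperty("sun.arch.data.model");
        if ("32".equals(model) || "64".equals(model)) {
            return model;
        }
        // Fallback guess from os.arch (e.g. "amd64", "x86").
        String arch = System.getProperty("os.arch", "");
        if (arch.contains("64")) {
            return "64";
        }
        if (arch.equals("x86") || arch.equals("i386")) {
            return "32";
        }
        return "unknown";
    }

    public static void main(String[] args) {
        System.out.println("java.home    = " + System.getProperty("java.home"));
        System.out.println("java.version = " + System.getProperty("java.version"));
        System.out.println("bitness      = " + bitness());
    }
}
```

Running it with the `java` binary from each JDK folder in turn should show whether the 32-bit or 64-bit one is in play.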

Tools

- Maven

Repository

- Git

- GitG – for a GUI. I always eyeball my stuff before check-in.

- SVN

Data

- MySQL and Workbench

Network

- Terminal

- VNC

Servers

- JBoss 4.3.0. Yep.

- Apache

Browsers

- Midori

- Chrome

- Firefox

Office

- Open Office

On the Windows 7 Machine

IDEs

- Eclipse Juno for one project

- Eclipse Kepler for the other projects.

- Notepad++

Languages

- Java — same thing with the multiple JDKs. Plus, Windows is nasty about its own installation.

- Groovy

- Scala

- PHP

- Python

- TCL

- Perl

Tools

- Maven

- Visual VM

- Gitstat

- StatSVN

- KeepFocussed

- Cobertura

- KDiff3

- Portable Apps

- jSimpleX

Repository

- Git

- TortoiseGit

- Git Gui

- Svn

- Hg

Data

- SQLite

- SQuirreL SQL – a JDBC SQL GUI

- DBeaver – another JDBC SQL GUI

Network

- MobaXTerm

- VNC

- Fiddler

- Putty

- Wget

Servers

- Apache

- Tomcat

- Jetty

- HFS

- Nginx

- Node.js

- JBoss

- Jenkins

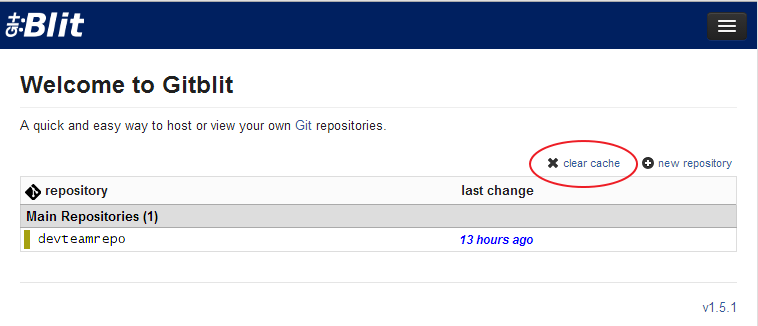

- Gitblit

Project Management

- Fossil

- Kanboard

Browsers

- Opera

- Chrome

- IE

- Firefox

- Safari

Office

- Microsoft Office

- Microsoft Outlook

Notes

- I always zip up my IDE setups for backup and quick sharing/replication if needed. Also, I find it best to give each project its own IDE, especially with Eclipse. There’s always a different team with different preferences, so it’s almost unavoidable.

- I always portablize my JDKs. I can’t stand it when a JDK has to be “installed,” and I have no admin rights on my Win 7 machine now anyway. Lots of pathing: I have everything set up in both operating systems so I can use paths to solve problems. None of this installation stuff if possible. Even Linux distros are getting too “instally” for me these days.
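The "pathing" idea can be sketched roughly like this: point `JAVA_HOME` at an unzipped JDK folder and put its `bin` directory first on `PATH` for a child process, with nothing installed. This is only an illustration under assumptions; the class `PortableJdk` and the method `envFor` are made-up names, and the JDK folder is whatever you pass on the command line.

```java
import java.io.File;
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch: run a child process against a JDK that was only unzipped.
class PortableJdk {

    /** Copies base, sets JAVA_HOME, and puts jdkHome/bin first on the path. */
    static Map<String, String> envFor(String jdkHome, Map<String, String> base) {
        Map<String, String> env = new HashMap<>(base);
        env.put("JAVA_HOME", jdkHome);

        // Windows usually spells the variable "Path", so match case-insensitively.
        String pathKey = "PATH";
        for (String key : base.keySet()) {
            if (key.equalsIgnoreCase("PATH")) {
                pathKey = key;
                break;
            }
        }
        String bin = jdkHome + File.separator + "bin";
        String oldPath = env.get(pathKey);
        env.put(pathKey, (oldPath == null || oldPath.isEmpty())
                ? bin
                : bin + File.pathSeparator + oldPath);
        return env;
    }

    public static void main(String[] args) throws Exception {
        // Assumption: first argument is the unzipped JDK folder; default to the current one.
        String jdkHome = args.length > 0 ? args[0] : System.getProperty("java.home");
        String javaExe = jdkHome + File.separator + "bin" + File.separator + "java";

        ProcessBuilder pb = new ProcessBuilder(javaExe, "-version");
        pb.environment().putAll(envFor(jdkHome, System.getenv()));
        pb.inheritIO();
        System.exit(pb.start().waitFor());
    }
}
```

The same environment map could be handed to a Maven or server launch instead of `java -version`; a shell or batch wrapper does the same job, and this just shows the mechanics in one place.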

I do not use the Spring Eclipse IDE or any other monster-plugin collections. I prefer as slim an IDE as possible. That said, I use these in Eclipse and install similar ones as needed in IntelliJ.

- M2E for Maven

- EGit

- Anything SVN — the connectors for this are still a pain in the backside though

- EclEmma/Cobertura

- MoreUnit

- FindBugs

- CheckStyle

The point is to be able to write, generate, and test code and do analytics on it as fast as possible. Also, I prefer external servers for debugging vs. the internal Maven-pom style server plugins.

More configure = good.

Vanilla from box = good.

Ciao for now.